Why AI Could Make You Fail AMC Clinicals

Read time: 6 minutes

Welcome to the machine age of medical education.

AI can generate cases fast. It can give you endless differentials. It can make you feel productive.

That is exactly the danger.

If you rely on AI to do the heavy lifting, it can train the wrong habits for a high-stakes clinical exam.

Not just weak habits.

Failing habits.

Are you practising to pass, or training yourself to fail faster?

The AMC Clinical OSCE is not a knowledge test. It is a timed performance exam built around safe, effective practice in the Australian healthcare system.

You get two minutes to read.

You get eight minutes to perform.

And the global rating decides the station.

So if your study method weakens your timing, presence, or structure, it does not just slow you down.

It lowers examiner trust.

The clock runs out.

Your station loses shape.

Your global rating drops.

When AMC candidates use AI as their main study tool, the same failure patterns appear again and again:

- They build content-first habits instead of human-first nonverbal behaviours.

- They deliver comprehensive information dumps rather than prioritised physical examination or management plans.

- They memorise generic safety standards that drift away from Australian cultural and legal expectations.

- They practise in an untimed environment, entirely losing their eight-minute pacing calibration.

- They miss nuanced patient affect cues, such as anger or hesitation, because chatbots are too cooperative.

- Inevitably, they let AI replace real role-play practice.

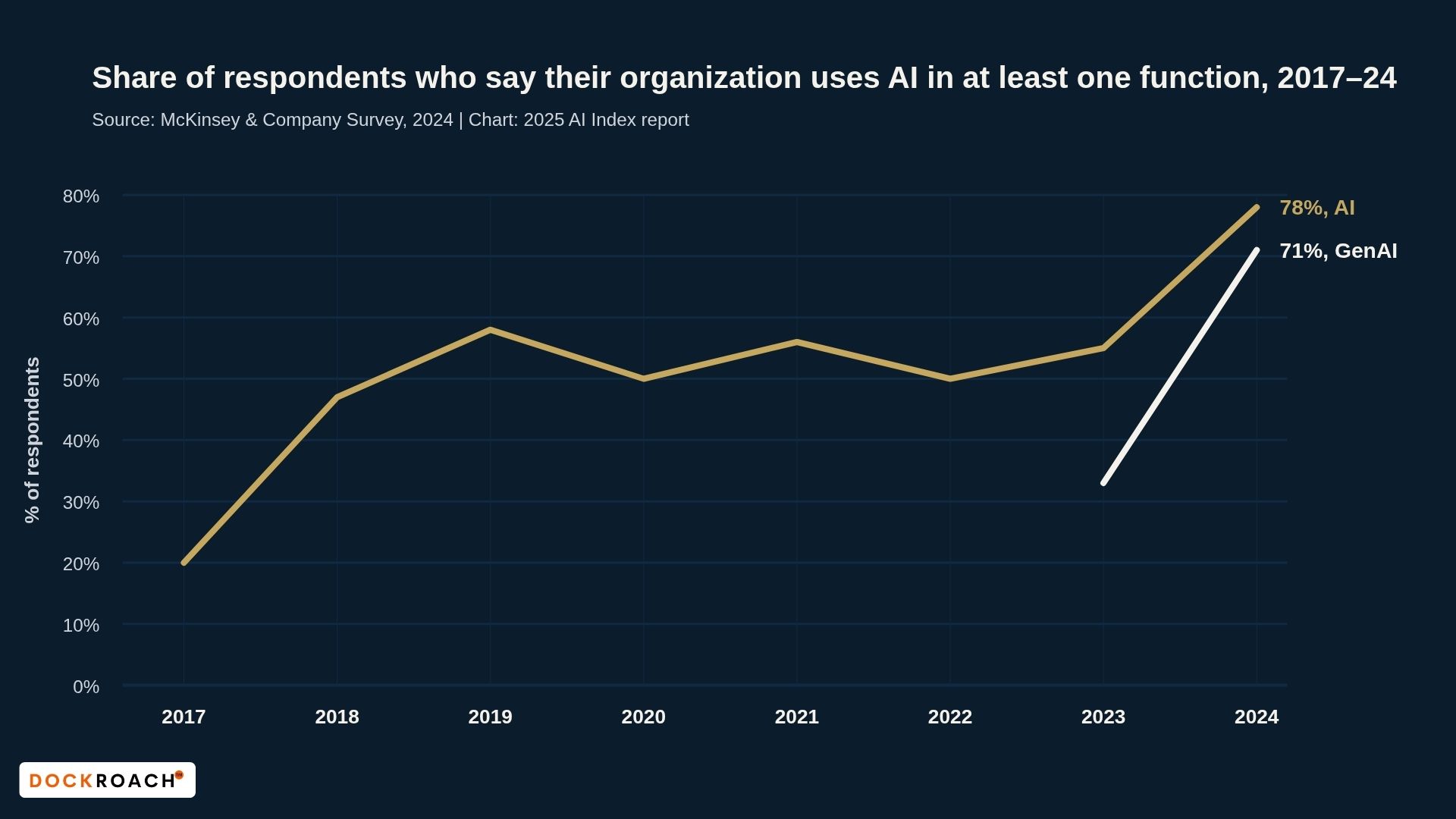

AI is mainstream.

AMC performance is not.

By 2024, generative AI adoption had reached 71% across professional sectors. The tool is everywhere. But as AI becomes operationally normal, a serious pattern is emerging: AMC candidates are starting to replace the necessary friction of live clinical role-play with the artificial comfort of a screen.

Four Ways AI Backfires in AMC Clinicals:

- The Distraction of Simulated Empathy

- The Erosion of Timed Role-Play

- Information Overload

- Generic Feedback

1. The Distraction of Simulated Empathy

AI can imitate empathy in text. It cannot replicate the messy human signals that test your true clinician presence.

Practising only with AI removes the messiness of real human interaction.

A language model gives you neat, cooperative dialogue. That means you undertrain on anger, resistance, hesitation, and emotional mismatch.

The result is simple: a weaker approach to the patient and a lower examiner impression of your clinician presence.

The Empathy Gap: AI vs. Reality

The AI Role-Play (Zero Friction)

AI Patient: "Doctor, could you please help me with my symptoms?"

- Easy opening.

- Cooperative tone.

- Low emotional friction.

- Fast entry into questions.

The Human Role-Play (High EQ)

Simulated Patient: "Why are you bothering me? I’ve already told the nurses I want to go home, so why should I even be here?"

- Harder opening.

- Emotion before information.

- Requires empathy and de-escalation.

- You must manage the person before the checklist.

Track your practice: aim for a minimum of five in-person role-plays per week to maintain human rapport.

2. The Erosion of Timed Role-Play

Timed role-play builds what untimed screen practice cannot: pace, physical fluency, and calm control under exam pressure.

AI interactions are often untimed and non-punitive.

You sit at a screen, chatting back and forth, with zero physical embodiment.

The AMC exam is a physical, high-fidelity environment: you move between stations, manage infection control, and work to strict audio timing announcements.

The Timing Gap: Chatting vs Performing

AI Role-Play

AI patient: “I’ve been feeling tired lately.”

Candidate: “Tell me more. How long has it been happening? How is your sleep? Appetite? Mood? Weight? Stress? Work? Family? Exercise?”

Result:

- You keep exploring.

- There is no time penalty.

- There is no forced closure.

- You chat through conditions and differentials instead of building real-world clinical skill.

Timed Station (8-Minute Pressure)

Patient: “I’ve been feeling tired lately.”

Candidate: “I’ll ask a few focused questions first, then I’ll explain what I think is going on and what we should do next.”

Result:

- You must structure early.

- You must narrow quickly.

- You must finish cleanly.

AI rewards continuation.

The AMC rewards completion.

3. Information Overload

AI output tends to favour comprehensive, exhaustive lists.

When you rehearse this breadth over prioritisation, you experience cognitive overload under the pressure of the eight-minute clock.

You hesitate, freeze mid-station, and ultimately appear unfocused and unsafe.

The Compression Gap: AI Breadth vs. Exam Prioritisation

Patient: “So what do you think is going on, doctor?”

The AI Role-Play (Verbal Overload)

"There are many differentials to consider. We must rule out cardiac causes like myocardial infarction, respiratory issues like pulmonary embolism, gastrointestinal problems like GORD, as well as musculoskeletal strain and psychogenic factors. We need to think broadly."

The Human Role-Play (Structured Compression)

“The three main possibilities I’m considering are a heart-related cause, reflux, and musculoskeletal pain. The first priority is ruling out anything serious. Then I’ll explain what fits best and the next step.”

Know more. Say less.

Do not recite everything you know.

Prioritise what matters most.

4. Generic Feedback

Text-based AI feedback often misses the exact performance qualities that shape the AMC global rating.

It works like a checklist. It does not fully catch tone, pauses, timing, or clinical warmth.

The Feedback Gap: Checklist Praise vs. Exam-Relevant Coaching

AMC Candidate: “How was that?”

AI feedback:

“You demonstrated empathy, gave a reasonable differential, and provided an appropriate management plan. Consider improving structure and keeping your answer concise.”

Dr Aarons (human coach):

“Your knowledge is there. The problem is your delivery. You spent too long in the middle, you did not respond enough to the patient’s concerns, and your explanation became rushed at the end.

Next time, shorten the middle, check understanding earlier, and protect the final part of the station. When the patient feels heard, your explanation lands better, your timing improves, and the whole station feels calmer and more controlled.”

Measure your debrief time: spend 2–3 minutes after each station reviewing your performance with a human peer or coach.

A chatbot cannot tell you whether you come across as safe to a patient.

“Under pressure, you do not rise. You fall to your training.”

- Often attributed to Archilochus

If your training is untimed, screen-based, and text-heavy, your exam performance will feel the same:

disconnected,

wordy,

and robotic.

Personal note

When I prepared for the AMC Clinical Exam, I did about 3,000 role-plays across 16 months. That is where my real improvement came from. Not from reading more. Not from collecting more notes. From repetition under pressure.

I now coach my clients the same way. We do live, timed role-play. We train the first minute, the middle, and the close. I interrupt them. I challenge their reasoning. I make them handle emotion, resistance, and poor timing. Then we break the station down properly and rebuild it.

I have watched capable candidates underperform simply because they spent too much of their preparation in front of a screen, asking AI to organise confusing recalls instead of rehearsing out loud with a real person.

This exam does not just test what you know.

It tests what comes out of you under pressure.

You need friction.

You need to fail in practice.

You need someone to interrupt you, challenge your reasoning, and assess your clinical presence.

That is why I train performance, not just knowledge.

Quick recap:

- Human nonverbal cues cannot be learned from a screen.

- Untimed practice destroys your eight-minute clock calibration.

- Over-explaining differentials makes you look unsafe and unstructured.

- Generic feedback misses the Australian clinical nuance required to pass.

- Prioritise live role-play to build true integrated competence.

Stop relying on algorithms to do the work of human clinical practice. The AMC Clinical Accelerator is a complete performance system designed to build your pacing, prioritisation, and local cultural safety through high-fidelity human feedback.

Hope is not a reliable exam strategy. The AMC Examiner Mindset Masterclass is designed to calibrate your clinical appropriateness the way the examiner sees it. Secure your passing global rating here.

That's all for today. See you in a fortnight.